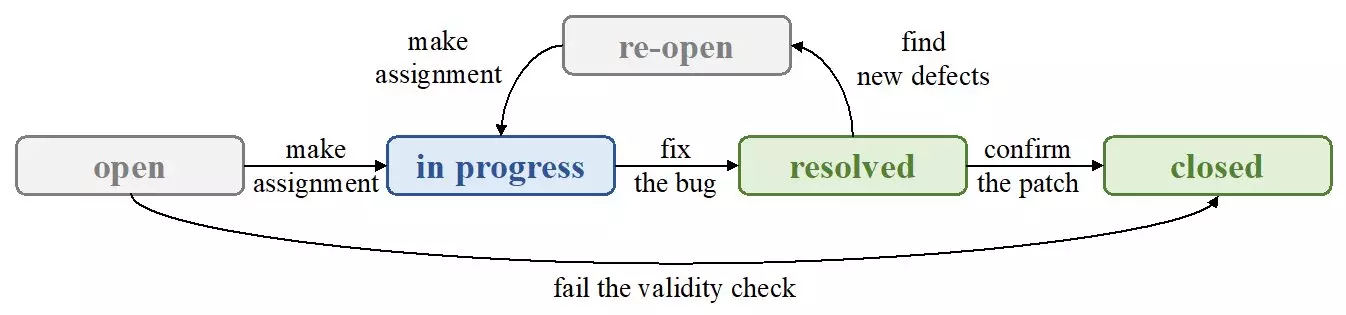

Bug assignment, the task of routing each reported bug to the developer best placed to fix it, remains a critical concern in software engineering. For years, engineers have relied on textual bug reports, which describe a bug and its potential causes, to guide their troubleshooting. Researchers have invested heavily in automating this process, using Natural Language Processing (NLP) techniques to parse the reports. Despite these advances, one problem persists: the noise inherent in textual data often undermines the reliability of automated bug assignment systems.

The Shortcomings of Textual Analysis

A recent study by a research team led by Zexuan Li highlights the inadequacies of classical NLP methods in bug assignment. Using TextCNN, a convolutional neural network for text classification, the researchers tested whether better text processing could make textual features more effective at identifying buggy files. It did not: even with this stronger model, textual features failed to outperform the other features in the comparison, which points to a significant hurdle for NLP-based bug assignment. This raises an intriguing question: why do textual features fall short in a domain where language should, in theory, provide clarity?

Software bugs are intricate, so their textual descriptions are often ambiguous and vary widely in wording, which makes them hard to interpret automatically. The research posits that nominal features, categorical attributes that reflect developer preferences, offer a more robust alternative. Such features have emerged as critical inputs to bug assignment methods, suggesting that the key to better performance lies not in parsing language more adeptly but in using simpler, structured data.
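To make the idea of a nominal feature concrete, here is a minimal sketch of how categorical fields of a bug report could be turned into model inputs with one-hot encoding. The field names (`product`, `component`, `severity`) and the three reports are hypothetical, chosen only for illustration; the study's actual feature set and encoding are not described here.

```python
def one_hot(reports, fields):
    """Give every (field, value) pair its own 0/1 column."""
    # Hypothetical helper: collect each distinct value seen for each field.
    columns = sorted({(f, r[f]) for r in reports for f in fields})
    rows = [[int(r[f] == v) for f, v in columns] for r in reports]
    return columns, rows


# Toy bug reports, invented for this example.
reports = [
    {"product": "browser", "component": "layout", "severity": "major"},
    {"product": "browser", "component": "network", "severity": "minor"},
    {"product": "mail", "component": "network", "severity": "major"},
]

columns, rows = one_hot(reports, ["product", "component", "severity"])
for report, row in zip(reports, rows):
    print(report["component"], row)
```

Each report becomes a fixed-length vector with exactly one active column per field. Unlike free text, these values have no spelling variants or ambiguous phrasing, which is one plausible reason such features are less noisy.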

The Significance of Nominal Features

Li’s team then examined which features matter most in bug assignment, taking a statistical approach. They adopted a wrapper method with a bidirectional strategy: classifiers are trained iteratively, and the importance of each feature is judged by its effect on performance metrics. The result was stark: nominal features often outperform textual features. This challenges the traditional reliance on textual information and suggests a shift toward structured, data-driven approaches.
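The general shape of a wrapper method with a bidirectional search can be sketched as follows. This is an illustration under stated assumptions, not the study's implementation: the lookup classifier, the single held-out split, the feature names, and the toy data are all hypothetical, and a real experiment would use a proper classifier with cross-validation.

```python
from collections import Counter, defaultdict


def accuracy(train, test, features, label="developer"):
    """Score a lookup classifier that only sees the given features."""
    fallback = Counter(r[label] for r in train).most_common(1)[0][0]
    votes = defaultdict(Counter)
    for r in train:
        votes[tuple(r[f] for f in features)][r[label]] += 1
    hits = 0
    for r in test:
        key = tuple(r[f] for f in features)
        # Unseen feature combinations fall back to the most common developer.
        pred = votes[key].most_common(1)[0][0] if key in votes else fallback
        hits += pred == r[label]
    return hits / len(test)


def bidirectional_select(train, test, candidates):
    """Alternate forward (add) and backward (drop) steps until no gain."""
    selected, best = [], accuracy(train, test, [])
    improved = True
    while improved:
        improved = False
        # Forward step: add the single feature that raises accuracy most.
        gains = [(accuracy(train, test, selected + [f]), f)
                 for f in candidates if f not in selected]
        if gains:
            score, feat = max(gains, key=lambda g: g[0])
            if score > best:
                selected, best, improved = selected + [feat], score, True
        # Backward step: drop any feature whose removal raises accuracy.
        for f in list(selected):
            rest = [s for s in selected if s != f]
            score = accuracy(train, test, rest)
            if score > best:
                selected, best, improved = rest, score, True
    return selected, best


def row(product, component, first_word, developer):
    return {"product": product, "component": component,
            "first_word": first_word, "developer": developer}


# Toy data, invented for this example; "first_word" stands in for a noisy
# textual feature whose test-time values never appear in training.
TRAIN = [
    row("ui", "layout", "crash", "alice"), row("ui", "layout", "error", "alice"),
    row("ui", "render", "crash", "bob"), row("ui", "render", "error", "bob"),
    row("core", "net", "crash", "carol"), row("core", "net", "error", "carol"),
    row("core", "db", "crash", "dave"), row("core", "db", "error", "dave"),
]
TEST = [
    row("ui", "layout", "hang", "alice"), row("ui", "render", "hang", "bob"),
    row("core", "net", "hang", "carol"), row("core", "db", "hang", "dave"),
]

selected, best = bidirectional_select(
    TRAIN, TEST, ["product", "component", "first_word"])
print(selected, best)
```

On this toy data the search keeps only `component`: adding `product` brings no further gain, and adding the noisy `first_word` lowers accuracy because its test values were never seen in training. The point of the wrapper approach is that features are judged by the classifier's measured performance rather than by a separate statistical filter.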

The study reported an improvement in bug assignment accuracy of 11% to 25%, which suggests that the software development community should rethink its strategies. Rather than investing effort in refining NLP techniques that may yield only marginal benefits, there is more potential in deepening the understanding of nominal features.

Charting a Path Forward

The implications of this research extend beyond academic curiosity. The findings suggest that a knowledge graph linking source files with descriptive words could open the way to new approaches to bug assignment. By incorporating the most influential nominal features into machine learning models, developers can work from cleaner, more structured signals and improve bug tracking and resolution. Future research should prioritize these avenues to realize their full potential in combating software bugs.

In essence, we stand at a crossroads in bug assignment methodology; the choice to either cling to traditional textual analyses or boldly embrace the rigor of nominal features could redefine the landscape of software engineering.
